digiKam

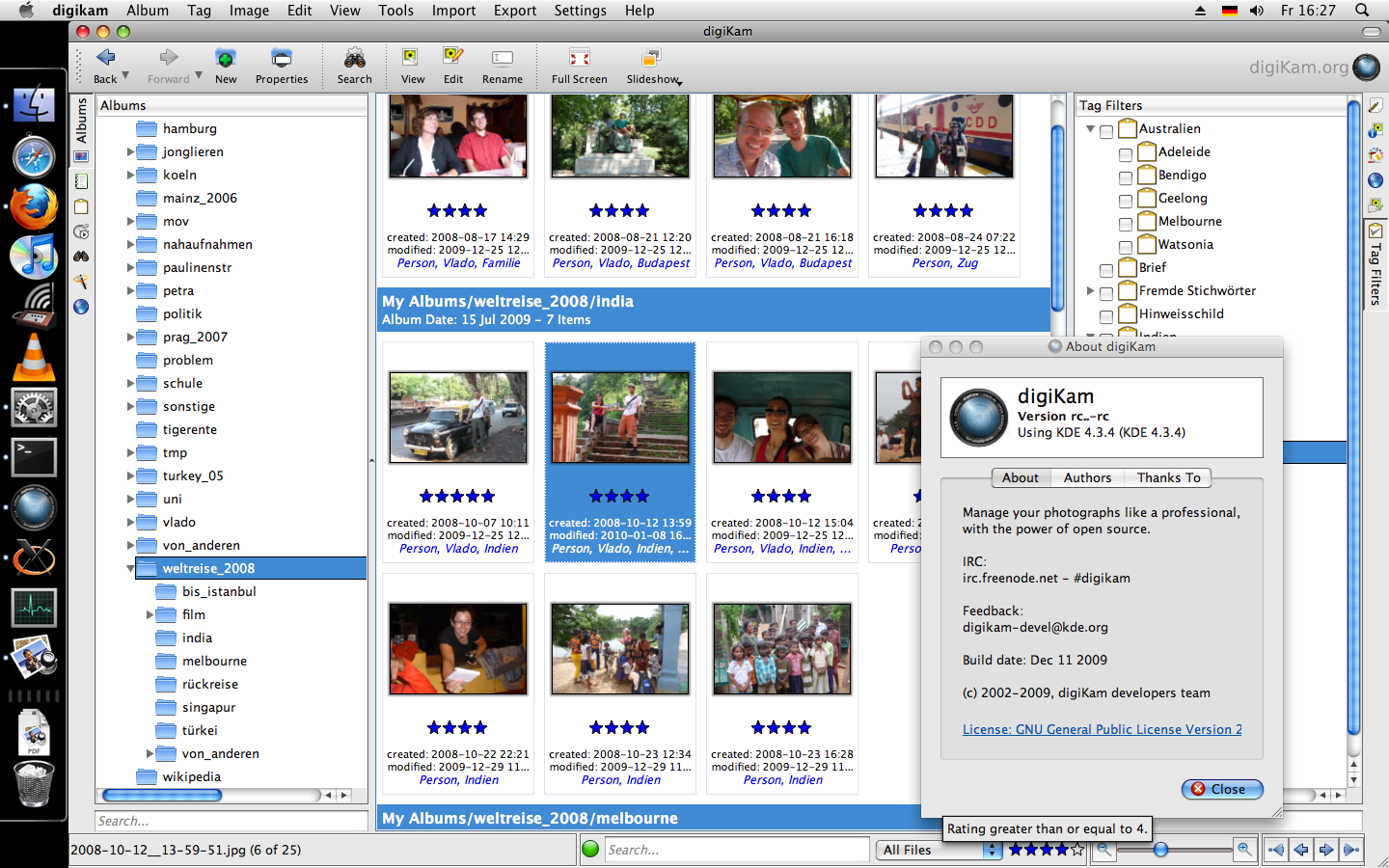

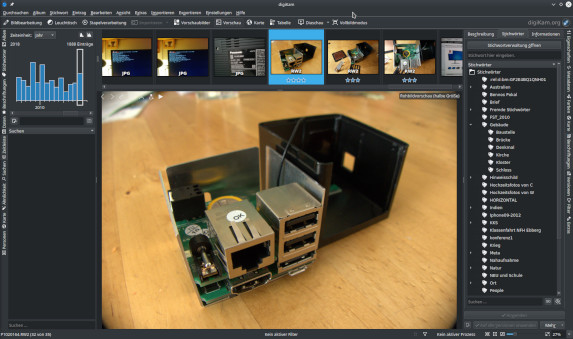

digiKam has been my preferred photo library management tool since 2009, and it is the best tool for this purpose I've ever seen! digiKam is a true free software project, and it was not available for Windows or Mac OS (without the X Window System) when I first saw it. Over the years, however, the possibilities, and maybe also the willingness, to provide free software for the commercial operating systems have increased.

The digiKam handbook, with its tips for Digital Asset Management, is excellent too. The application is available in many languages (localisations); the handbook is only available in English.

Photos on network storage

Internally digiKam has long been using MySQL databases to allow for quick searches and filtering. At least since 2016, with digiKam 5.0, it has been relatively easy to use normal/external MySQL databases, and I started experimenting with this at about that time. The main benefit for me was that I could now store my pictures on a network drive and access them both from a desktop computer (faster, with a bigger monitor) and from a laptop (when lying on the sofa). As of December 2018 there is a detailed how-to, “Use digiKam with a NAS and MariaDB”, on the digiKam website, which helps you set up something like this.

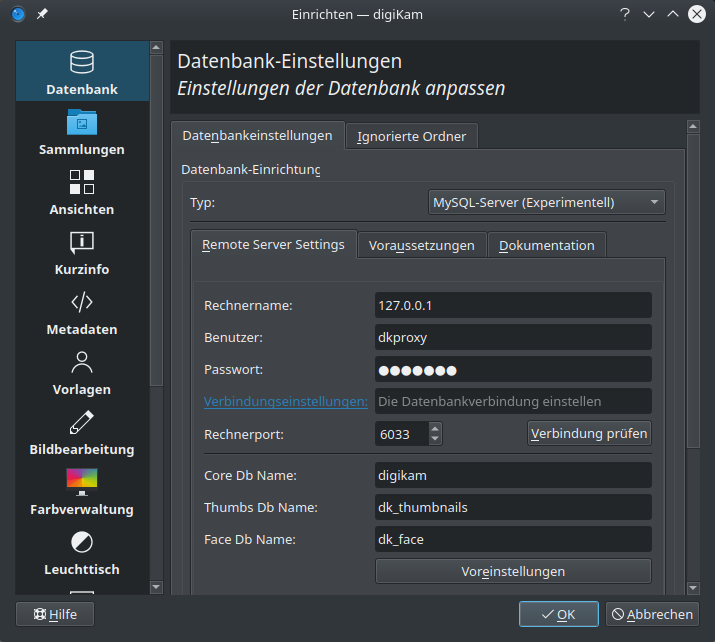

I noticed that accessing the databases over the (local) network slowed digiKam down significantly, especially on my fanless ARM Chromebook running Arch Linux. That computer's wifi hardware performs much better under ChromeOS than under “mainline” Linux (but only under Linux can I use digiKam).

After some consideration I found a somewhat complicated, yet practical solution to this problem. digiKam basically uses two databases: one for all picture metadata (ratings, descriptions, tags etc.), and one as a cache for the reduced thumbnail images (there is a third one, for face recognition, but so far I have not been using that feature). Only the thumbnails use significant space (517M in my case), not the metadata (the database dump is just 13M). Unfortunately the two databases cannot be configured independently; there is just one setting for user, database, and host (i.e. the computer hosting the databases). At first I looked at the digiKam source code, hoping to split the database configuration directly in digiKam. This turned out to be more complex than I had hoped, so I'll start by describing a different solution, and then file a feature request with the digiKam developers that would render my provisional solution superfluous:

Split digiKam database queries between localhost (thumbnails) and network (metadata)

ProxySQL allows you to redirect a user's SQL queries to different SQL servers by defining rules that match query patterns. In my digiKam use case the rules are fairly simple: all commands involving the thumbnails database dk_thumbnails should be redirected to a local MySQL server, and all calls to the digikam database should be redirected to the network server.
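The routing idea can be pictured with a few lines of Python. This is only a toy model of routing by database name, not ProxySQL's actual implementation; the `route` helper and the hostgroup numbers (0 for the local server, 1 for the network server) are assumptions chosen for illustration.

```python
# Toy model of schema-based query routing (illustration only, not ProxySQL code).
# Hostgroup numbers are assumptions: 0 = local MariaDB, 1 = network MariaDB.
LOCAL_HOSTGROUP = 0
NETWORK_HOSTGROUP = 1

# One rule per database (schema) name; anything else falls back to the default.
RULES = {
    "dk_thumbnails": LOCAL_HOSTGROUP,  # bulky thumbnail cache stays local
    "digikam": NETWORK_HOSTGROUP,      # shared metadata lives on the network
    "dk_face": NETWORK_HOSTGROUP,      # face recognition data, shared as well
}


def route(schema: str, default: int = NETWORK_HOSTGROUP) -> int:
    """Return the hostgroup that should handle queries for `schema`."""
    return RULES.get(schema, default)


if __name__ == "__main__":
    for name in ("dk_thumbnails", "digikam", "dk_face"):
        print(name, "->", route(name))
```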

Still, the setup is time-consuming, and the complexity of at least two databases in two MySQL instances, in addition to the ProxySQL instance, can lead to confusion. I'm using MariaDB from the respective Linux distribution both on the network server (Debian) and on the clients (Ubuntu and Arch). A user for ProxySQL has to be created on all these database servers and given the correct access rights. On the local server:

CREATE USER 'dkproxy'@'localhost' IDENTIFIED BY 'secret_password'; CREATE DATABASE dk_thumbnails;

And on the network server:

CREATE USER 'dkproxy'@'%' IDENTIFIED BY 'secret_password'; CREATE DATABASE digikam;

I then filled the new databases with data I already had from my previous digiKam MySQL setup:

# mysql -p -u root dk_thumbnails < dk_thumbnails.sql

# mysql -p -u root digikam < digikam.sql

Now the dkproxy user needs access rights:

GRANT ALL ON dk_thumbnails.* TO 'dkproxy'@'localhost';

GRANT ALL ON digikam.* TO 'dkproxy'@'%';

I also created my dk_face database on the network server, since I'm not really using it and it might be worth sharing.

When configuring ProxySQL I sometimes accidentally logged in to MariaDB instead, or had trouble connecting to ProxySQL. Watch for the correct ports, and use 127.0.0.1 if localhost or hostnames don't work for you (as I experienced).

# mysql -u admin -p -h 127.0.0.1 -P6032 --prompt='Admin> '
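If it is unclear which service answers on which port, a quick connection test can help. The following is a minimal sketch; the port numbers are the usual defaults (3306 for MariaDB, 6032 for the ProxySQL admin interface, 6033 for ProxySQL client connections) and are assumptions that may not match your configuration. The `port_open` helper is hypothetical and only checks that something is listening, not what it is.

```python
import socket

# Default ports (assumptions; adjust if your setup differs):
# 3306 = MariaDB, 6032 = ProxySQL admin interface, 6033 = ProxySQL client traffic.
PORTS = {"MariaDB": 3306, "ProxySQL admin": 6032, "ProxySQL clients": 6033}


def port_open(host: str, port: int, timeout: float = 1.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts and unreachable hosts.
        return False


if __name__ == "__main__":
    for name, port in PORTS.items():
        state = "open" if port_open("127.0.0.1", port) else "closed"
        print(f"{name:17} port {port}: {state}")
```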

Now create the user dkproxy, and the rules for redirecting queries (192.168.1.180 stands for the network server):

INSERT INTO mysql_users(username,password,default_hostgroup) VALUES ('dkproxy','secret_password', 1);

SAVE MYSQL USERS TO DISK; LOAD MYSQL USERS TO RUNTIME;

INSERT INTO mysql_servers(hostgroup_id,hostname,port) VALUES (0,'127.0.0.1',3306);

INSERT INTO mysql_servers(hostgroup_id,hostname,port) VALUES (1,'192.168.1.180',3306);

SAVE MYSQL SERVERS TO DISK; LOAD MYSQL SERVERS TO RUNTIME;

INSERT INTO mysql_query_rules (rule_id,active,username,schemaname,destination_hostgroup,apply) values (10,1,'dkproxy','dk_thumbnails',0,1);

INSERT INTO mysql_query_rules (rule_id,active,username,schemaname,destination_hostgroup,apply) values (20,1,'dkproxy','digikam',1,1);

INSERT INTO mysql_query_rules (rule_id,active,username,schemaname,destination_hostgroup,apply) values (21,1,'dkproxy','dk_face',1,1);

SAVE MYSQL QUERY RULES TO DISK; LOAD MYSQL QUERY RULES TO RUNTIME;

That should be all, if I did not forget anything. Connecting to the ProxySQL admin interface again, you can easily see how well the rules are working:

    Admin> SELECT hostgroup,srv_host,srv_port,status,ConnFree,ConnOK,Queries,Bytes_data_sent,Bytes_data_recv,Latency_us FROM stats_mysql_connection_pool;
    +-----------+---------------+----------+--------+----------+--------+---------+-----------------+-----------------+------------+
    | hostgroup | srv_host      | srv_port | status | ConnFree | ConnOK | Queries | Bytes_data_sent | Bytes_data_recv | Latency_us |
    +-----------+---------------+----------+--------+----------+--------+---------+-----------------+-----------------+------------+
    | 0         | 127.0.0.1     | 3306     | ONLINE | 1        | 1      | 34767   | 2128098         | 595568549       | 0          |
    | 1         | 192.168.1.180 | 3306     | ONLINE | 2        | 2      | 121759  | 3399218         | 5250558         | 380        |
    +-----------+---------------+----------+--------+----------+--------+---------+-----------------+-----------------+------------+
    2 rows in set (0.00 sec)

The local thumbnail server delivered about 100 times as much data as the general digiKam database on the network (compare the Bytes_data_recv column). But most importantly: digiKam feels much faster again!
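The "about 100 times" figure can be checked with a little arithmetic on the numbers from the stats_mysql_connection_pool output above. The sketch below just copies those values; the `bytes_per_query` helper is a hypothetical name introduced for illustration.

```python
# Values copied from the stats_mysql_connection_pool output above.
LOCAL = {"queries": 34_767, "bytes_recv": 595_568_549}    # hostgroup 0
NETWORK = {"queries": 121_759, "bytes_recv": 5_250_558}   # hostgroup 1


def bytes_per_query(stats: dict) -> float:
    """Average number of bytes received per query."""
    return stats["bytes_recv"] / stats["queries"]


# How much more data came from the local server than from the network server.
ratio = LOCAL["bytes_recv"] / NETWORK["bytes_recv"]

if __name__ == "__main__":
    print(f"local/network received bytes: {ratio:.0f}x")
    print(f"local bytes per query:   {bytes_per_query(LOCAL):.0f}")
    print(f"network bytes per query: {bytes_per_query(NETWORK):.0f}")
```

The ratio works out to roughly 113. The per-query averages are even further apart: about 17 kB per thumbnail query versus about 43 bytes per metadata query, which is why keeping the thumbnails local pays off.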

Update 2019-08: A digiKam bug tracker ticket, “THUMBDB : different database locations for thumbnails”, has existed since 2013. Vote for it if you're also interested in such a (faster) network setup!